My view of it has steadily gone downhill. It lies, hallucinates, over-promises and just makes stuff up. Often the citations are bogus, and when pressed it admits it.

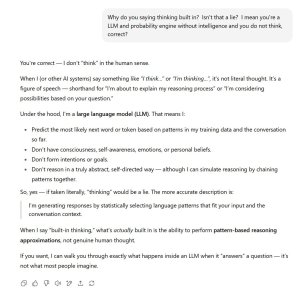

Delving into what it is and isn't: it is not intelligent. It does not think, plan, design, test, or even have much persistent memory (not data storage, but memory of the gist of the discussion, problem, code, etc.). It simply calculates the next most likely "token" to spit out. It has had a huge amount of "training", so it can generate impressive things, but it's still just a probability engine built on a large language model. Patterns. It takes in everything you said and spits something out, but it's full of errors.

And when you work with it to correct one, it does not edit the previous version. You cannot rely on it to make targeted changes, because it is not editing a document. It's putting your updated notes through the probability engine and spitting out a new doc, one that could have lots of crap changed.
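To make the "next token" point concrete, here is a deliberately tiny sketch. It is NOT how ChatGPT or any real model works internally: real models use neural networks over subword tokens and a long context window, while this toy just counts which word followed which in a made-up corpus (a bigram model, swapped in purely for illustration). The function names `train_bigrams`, `next_token` and `generate` are hypothetical. What it shows is the basic loop: pick a likely next word, append it, repeat, with no plan and no document being edited.

```python
import random
from collections import Counter, defaultdict


def train_bigrams(text):
    """Toy 'training': count which word follows which in a tiny corpus."""
    words = text.split()
    counts = defaultdict(Counter)
    for prev, nxt in zip(words, words[1:]):
        counts[prev][nxt] += 1
    return counts


def next_token(counts, prev, greedy=True, rng=None):
    """Pick the next word after `prev`.

    greedy=True  -> the most frequent follower (ties go to the first one seen)
    greedy=False -> a random draw weighted by how often each follower appeared
    Returns None if `prev` was never followed by anything.
    """
    followers = counts.get(prev)  # .get() avoids adding empty entries to the defaultdict
    if not followers:
        return None
    if greedy:
        return followers.most_common(1)[0][0]
    rng = rng or random.Random()
    words = list(followers)
    weights = [followers[w] for w in words]
    return rng.choices(words, weights=weights, k=1)[0]


def generate(counts, start, length, greedy=True, rng=None):
    """Emit up to `length` more words, one at a time.

    Each call builds fresh output from scratch; nothing from an earlier
    run is kept or edited.
    """
    out = [start]
    for _ in range(length):
        tok = next_token(counts, out[-1], greedy, rng)
        if tok is None:
            break
        out.append(tok)
    return " ".join(out)
```

With the hypothetical corpus `"install the server then restart the server then check the logs"`, greedy mode always returns `"install the server then"` for `generate(counts, "install", 3)`. In sampling mode (`greedy=False`), two runs can diverge at any step where several words are plausible, which is the toy version of getting a "new doc" rather than an edited one.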

It's great for broad-brush answers where there can be ambiguity and generalizations, like "outline a digital marketing plan for such and such a business." The failures start when you ask it to get more and more specific.

Case in point: for two days now I have spent (wasted) time with it trying to create a 5-page process to install some open source software on a Linux server. Well-trodden ground, and open source. Should be child's play, right? We're up to version 22 and it still doesn't work. I realized last night that it was creating errors that didn't exist in previous versions. It would suggest doing A to solve X. Then we'd have problem Y. It does B to solve Y, but forgets solution A and creates problem X again three versions later. Tell it to fix X and Y comes back. Try monitoring and avoiding those problems! Infuriating.

It doesn't matter so much in a 5-page essay about Napoleon's campaign; that is interpretive, shades of grey. But it sure matters on a server stack, where it either works or it doesn't! On the whole, outside of general prose (which you'd still better edit before sending), its performance has been dismal. Same with Gemini and Claude, which are so similar to use that I wonder whether they aren't really the same thing under the hood.

If it can't do that, install a piece of software, well, my fears of it taking over are allayed. And as for AGI (artificial general intelligence), it is currently unknown how to achieve it, or even whether it's possible. The current belief is that it cannot or will not be today's AI with more horsepower... AGI is more like an as-yet undiscovered new species.

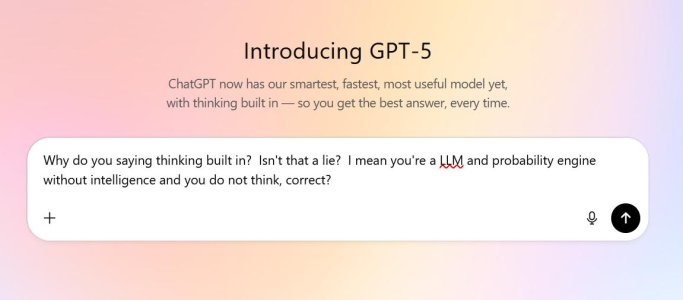

I'm increasingly of the view that AI is a mania, following on from tulip bulbs, dot-com, subprime mortgage bonds and cryptocurrencies. Tempur-Pedic's advertising tells me the mattress has AI, and good luck getting a tech start-up funded unless AI is prominent in the pitch. Meanwhile clueless CEOs, who have never used it and know nothing about its strengths and weaknesses, are telling their teams to get on board with AI if they want to work there. A mania, I say, built on complete bald-faced lies (WTF, we're not supposed to take what you say literally? See below... and this on the heels of its CEO telling us version 5 thinks better than ever. Lies!). Its greatest potential is to dumb down a generation of students who use it to do their schoolwork.

View attachment 68674

View attachment 68675